Logstash runs on the JVM and consumes 500–800 MB of memory just on startup. On a VPS with 2–4 GB of RAM, that can be up to a third of the machine spent purely on log collection. Vector is written in Rust, starts in about a second, and uses 20–50 MB in a basic configuration. It covers the same ground as Logstash: it accepts logs from many kinds of sources, transforms them, and ships them to a wide range of destinations.

Installation

Debian / Ubuntu: add the official repository with the setup script, then install the package:

bash -c "$(curl -L https://setup.vector.dev)"

sudo apt install vector

Check version and status:

vector --version

sudo systemctl status vector

Configuration file:

sudo nano /etc/vector/vector.yaml

After changes — validate and restart:

vector validate /etc/vector/vector.yaml

sudo systemctl restart vector

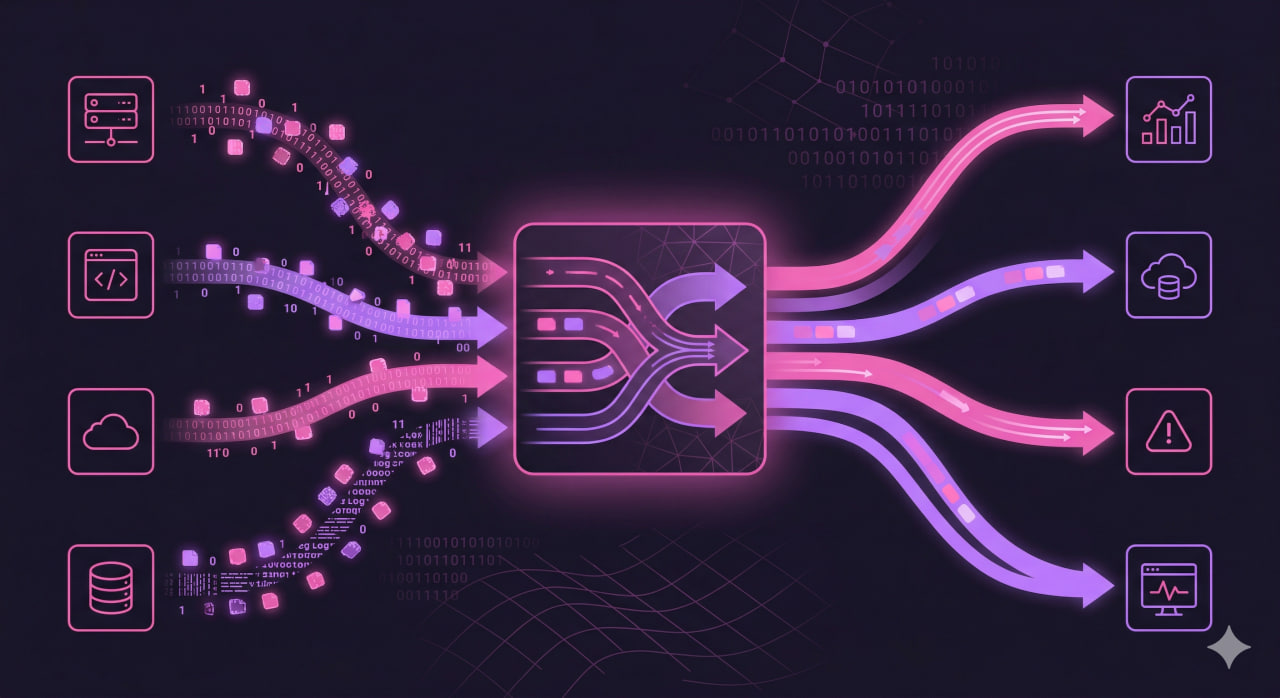

Concept: sources → transforms → sinks

Every Vector pipeline consists of three component types:

Sources — where to read logs from. Files, systemd journal, syslog, stdin, Kafka, HTTP.

Transforms — what to do with events. Parse, filter, rename fields, add metadata.

Sinks — where to send. Files, Loki, Elasticsearch, S3, ClickHouse, HTTP, stdout.

Each component gets a unique ID. Transforms and sinks declare inputs, the IDs of the components they pull data from. Together these references form a processing graph.
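As a minimal sketch of such a graph, the config below wires one source through one transform into one sink. The component IDs (demo_in, add_env, demo_out) and the env field are made up for illustration; stdin, remap, and console are standard Vector component types.

```yaml
# Hypothetical three-node pipeline: demo_in -> add_env -> demo_out
sources:
  demo_in:
    type: stdin            # reads lines from standard input

transforms:
  add_env:
    type: remap
    inputs:
      - demo_in            # pull events from the source above
    source: |
      # Illustrative field, chosen only for this sketch.
      .env = "staging"

sinks:
  demo_out:
    type: console
    inputs:
      - add_env            # pull events from the transform
    encoding:
      codec: json
```

Started with this file, Vector should echo every line typed on stdin as a JSON event carrying the extra field, which makes the source, transform, sink chain easy to see.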

Simplest Example: Read a File, Write to Another

    sources:
      app_logs:
        type: file
        include:
          - /var/log/myapp/*.log
        read_from: beginning

    sinks:
      output_file:
        type: file
        inputs:
          - app_logs
        path: /var/log/vector/myapp-%Y-%m-%d.log
        encoding:
          codec: text

Read From systemd Journal

Source for system logs — all services through journald:

    sources:
      journal:
        type: journald
        include_units:
          - nginx
          - php8.1-fpm
          - postgresql

Without include_units, the source reads the entire journal. On a busy server that can mean a very large volume of events.
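When listing every wanted unit is impractical, the inverse approach may fit better. The sketch below assumes the journald source options exclude_units and current_boot_only behave as described in the Vector documentation; the component ID and the unit names are only examples.

```yaml
sources:
  journal_filtered:          # hypothetical component ID
    type: journald
    current_boot_only: true  # skip entries from previous boots
    exclude_units:           # read everything except these noisy units
      - cron
      - systemd-logind
```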

Parsing the Nginx Access Log

Nginx writes logs in a text format. Vector can parse them into structured fields using VRL (Vector Remap Language):

    sources:
      nginx_access:
        type: file
        include:
          - /var/log/nginx/access.log

    transforms:
      parse_nginx:
        type: remap
        inputs:
          - nginx_access
        source: |
          . = parse_nginx_log!(string!(.message), "combined")

    sinks:
      parsed_logs:
        type: file
        inputs:
          - parse_nginx
        path: /var/log/vector/nginx-%Y-%m-%d.json
        encoding:
          codec: json

After parsing, each event contains structured fields such as client, method, path, status, size, referer (spelled as in the HTTP header), and agent.
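One way to check that the parser produces the expected fields is a config unit test. The sketch below assumes the tests block format from Vector's unit-testing documentation and that it lives in the same config as the parse_nginx transform; the sample log line is invented for illustration.

```yaml
tests:
  - name: parse_nginx extracts method, path and status
    inputs:
      - insert_at: parse_nginx   # feed the event straight into the transform
        type: log
        log_fields:
          # Made-up access log line in the "combined" format.
          message: '203.0.113.7 - - [10/Oct/2024:13:55:36 +0000] "GET /index.html HTTP/1.1" 200 512 "-" "curl/8.5.0"'
    outputs:
      - extract_from: parse_nginx
        conditions:
          - type: vrl
            source: |
              assert_eq!(.method, "GET")
              assert_eq!(.path, "/index.html")
              assert_eq!(.status, 200)
```

Such tests are run with vector test /etc/vector/vector.yaml and do not touch real sources or sinks.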

VRL: The Transformation Language

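VRL (Vector Remap Language) is a built-in language for working with events. Its syntax is compact and expression-oriented, and programs are type-checked when Vector loads the config: a call to a function that can fail must either have its error handled or end in ! (as in parse_json!), which aborts the program for that event on failure.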

Add a hostname field:

    source: |
      .hostname = get_hostname!()

Rename a field:

    source: |
      .ip = del(.client_addr)

Filter only errors (drop everything except 4xx and 5xx):

    source: |
      if !starts_with(to_string!(.status), "4") && !starts_with(to_string!(.status), "5") {
        abort
      }

abort stops processing of the current event. With the remap transform's default settings the event is dropped and never reaches the sink.
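For a plain keep-or-drop decision, a dedicated filter transform may read more clearly than abort. The sketch below is one possible form; the component ID only_errors is hypothetical, and it assumes, like the to_string! call above, that the type of .status is not known to the compiler in this transform.

```yaml
transforms:
  only_errors:               # hypothetical component ID
    type: filter
    inputs:
      - parse_nginx
    # Events for which the VRL condition is true are kept, all others are dropped.
    condition: 'to_int!(.status) >= 400'
```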

Parse JSON from a field:

    source: |
      .parsed = parse_json!(.message)
      .level = .parsed.level
      .msg = .parsed.msg
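If some lines may not be valid JSON, the error can be captured instead of aborting the program with !. This is a sketch of that pattern, not the only way to do it; the component ID parse_app_json and the parse_error field are hypothetical names, and app_logs is the file source from the first example.

```yaml
transforms:
  parse_app_json:            # hypothetical component ID
    type: remap
    inputs:
      - app_logs
    source: |
      # Capture the error instead of aborting on invalid JSON.
      parsed, err = parse_json(.message)
      if err == null {
        .level = parsed.level
        .msg = parsed.msg
      } else {
        # Keep the raw line and mark it so it can be found later.
        .parse_error = err
      }
```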

Sending to Loki

    sinks:
      loki:
        type: loki
        inputs:
          - parse_nginx
        endpoint: http://localhost:3100
        labels:
          app: nginx
          env: production
          host: "{{ hostname }}"
        encoding:
          codec: json

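Labels are the key to Loki performance. Keep them few (3–5) and low in cardinality. Never use an IP address or user agent as a label: every distinct value creates a new stream, and that quickly grows to millions of streams. The {{ hostname }} template is filled from the hostname field of each event, so that field has to exist by the time the event reaches the sink.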
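One way to make sure the hostname field used by the label template exists is to set it in the same remap transform that parses the line. A sketch, reusing the parse_nginx transform from earlier:

```yaml
transforms:
  parse_nginx:
    type: remap
    inputs:
      - nginx_access
    source: |
      . = parse_nginx_log!(string!(.message), "combined")
      # Assigning to "." replaces the whole event, including the metadata
      # added by the file source, so the host name is added back here.
      .hostname = get_hostname!()
```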

Sending to Elasticsearch

    sinks:
      elasticsearch:
        type: elasticsearch
        inputs:
          - parse_nginx
        endpoints:
          - http://localhost:9200
        bulk:
          index: nginx-logs-%Y.%m.%d
        auth:
          strategy: basic
          user: elastic
          password: "${ES_PASSWORD}"

Vector substitutes environment variables written as ${NAME} when it loads the config, so passwords do not have to be stored in plain text in the file.
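The same interpolation can also carry a fallback value. The sketch below assumes Vector's ${NAME:-default} form; ES_URL is a hypothetical variable name introduced only for this example.

```yaml
sinks:
  elasticsearch:
    type: elasticsearch
    inputs:
      - parse_nginx
    endpoints:
      # Falls back to the local node when ES_URL is not set.
      - "${ES_URL:-http://localhost:9200}"
    auth:
      strategy: basic
      user: elastic
      # No default on purpose: a secret should come from the environment.
      password: "${ES_PASSWORD}"
```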

Routing: Different Logs to Different Destinations

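The route transform sends events from one input to different destinations based on their content. Here all_logs stands for any upstream component that emits events with a level field: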

    transforms:
      router:
        type: route
        inputs:
          - all_logs
        route:
          errors: '.level == "error" || .level == "critical"'
          info: '.level == "info" || .level == "debug"'

    sinks:
      errors_to_loki:
        type: loki
        inputs:
          - router.errors
        endpoint: http://localhost:3100
        labels:
          severity: error
        encoding:
          codec: json
      info_to_file:
        type: file
        inputs:
          - router.info
        path: /var/log/vector/info-%Y-%m-%d.log
        encoding:
          codec: text
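Events that match neither route are not sent to either sink above. The route transform exposes them on an extra output named _unmatched; the sketch below, with a hypothetical sink ID and file path, writes them to a separate file so that nothing is lost silently.

```yaml
sinks:
  unmatched_to_file:         # hypothetical component ID
    type: file
    inputs:
      - router._unmatched    # events that matched no named route
    path: /var/log/vector/unmatched-%Y-%m-%d.log
    encoding:
      codec: json
```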

Aggregating Metrics From Logs

Vector can count metrics directly from logs and expose them to Prometheus:

    transforms:
      count_errors:
        type: log_to_metric
        inputs:
          - parse_nginx
        metrics:
          - type: counter
            field: status
            name: nginx_requests_total
            tags:
              status: "{{ status }}"
              method: "{{ method }}"

    sinks:
      prometheus:
        type: prometheus_exporter
        inputs:
          - count_errors
        address: 0.0.0.0:9598

Prometheus scrapes http://server:9598/metrics and gets nginx_requests_total by status and method — no separate nginx-exporter needed.
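On the Prometheus side, the matching scrape job could look like the sketch below. It belongs in prometheus.yml rather than in the Vector config; the job name and interval are arbitrary choices, and server is the host from the URL above.

```yaml
# prometheus.yml (Prometheus side, not part of the Vector config)
scrape_configs:
  - job_name: vector          # hypothetical job name
    scrape_interval: 15s
    static_configs:
      - targets:
          - server:9598       # Vector's prometheus_exporter address
```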

Debugging: See What Flows Through the Pipeline

Output events to stdout during config development:

    sinks:
      debug:
        type: console
        inputs:
          - parse_nginx
        encoding:
          codec: json

Built-in tap: watch a live event stream through a specific component. The command talks to Vector's API, which has to be enabled in the config (api.enabled: true):

vector tap parse_nginx
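vector tap connects to the running instance through its API, so the API has to be switched on first. A minimal sketch of that setting, assuming the default listen address:

```yaml
api:
  enabled: true
  address: 127.0.0.1:8686   # default address; keep it bound to localhost
```

After a restart, vector tap parse_nginx should start printing the events that leave that component.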

Comparison With Logstash and Fluentd

| Parameter | Vector | Logstash | Fluentd |
|---|---|---|---|
| Language | Rust | JRuby / Java (JVM) | Ruby / C |
| RAM at startup | ~20–50 MB | ~500–800 MB | ~50–100 MB |
| CPU at idle | minimal | high | low |
| Transform language | VRL | Grok + Ruby | Fluent DSL |
| Performance | very high | medium | high |
| Metrics from logs | built-in | plugin | plugin |

On a VPS with limited resources, Vector's small memory footprint makes it the natural choice.

Quick Reference

| Task | Command / config |
|---|---|
| Install | sudo apt install vector |
| Validate config | vector validate /etc/vector/vector.yaml |
| Watch live stream | vector tap component_name |
| File source | type: file + include: |
| journald source | type: journald + include_units: |
| Parse Nginx | parse_nginx_log!(string!(.message), "combined") |
| Filter in VRL | if condition { abort } |
| Routing | type: route + named routes |
| Metrics from logs | type: log_to_metric |
| Prometheus exporter | type: prometheus_exporter |