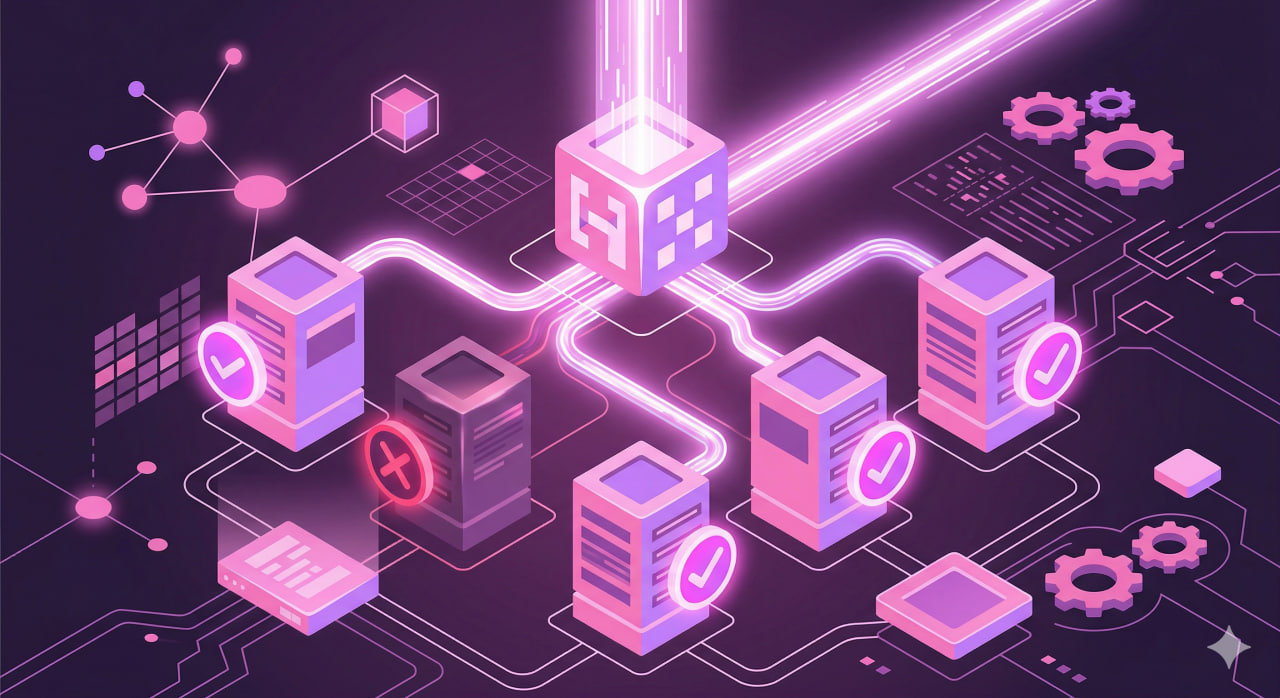

Running three VPS instances without automation is a stress test you will eventually fail. A server drops at 2am, a third of your traffic hits a dead host, and users get errors while you are asleep. HAProxy exists to remove you from that equation. It sits in front of your servers, probes each one on a configurable schedule, and pulls failing hosts from the rotation before any user request reaches them - no manual intervention, no error absorbed by a real visitor. Beyond failover, it handles HTTP, HTTPS, and raw TCP, routes requests by URL path or request headers, and surfaces a live dashboard showing the real-time state of every node in your cluster.

Nginx has an upstream block and can distribute load, but the free version only marks a backend as down after a real request fails - meaning one user absorbs the error before the host is removed from rotation. There is no built-in stats dashboard, and ACL-based routing beyond basic patterns requires paid modules. HAProxy's health checks run continuously in the background, independent of user traffic, on whatever interval you set. Once the probes have marked a backend as down, no visitor is routed to it, so failures are normally caught by the checks rather than by a user. In anything resembling a production setup, that distinction matters.

Installing on Ubuntu 22.04

HAProxy is available in Ubuntu's default package repositories. For most setups, the packaged version covers everything you need:

    apt update
    apt install haproxy -y
    haproxy -v

If you need a newer release or specific features such as HTTP/3 (which also requires a build with QUIC enabled), use the official PPA:

    add-apt-repository ppa:vbernat/haproxy-2.8
    apt update
    apt install haproxy=2.8.\*

The service starts automatically after installation. All configuration lives in a single file: /etc/haproxy/haproxy.cfg.

Config file structure

HAProxy's configuration is organized into four named sections. Understanding what each one does is more useful than memorizing syntax.

The global block configures the process itself - how many CPU threads to use (nbthread), where to expose the management socket (stats socket), which system user to run under. For most VPS setups, the defaults that ship with the Ubuntu package are fine. The one parameter worth adjusting is maxconn - the ceiling on simultaneous connections. With 2 GB of RAM, a safe value is somewhere between 10,000 and 15,000, depending on your workload.
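As a rough illustration of the global block, the sketch below keeps the socket path and system user that Ubuntu's packaged config uses and raises only maxconn. The value 10000 is an assumed figure taken from the low end of the range above, not a measured limit. Edit the existing global section rather than adding a second one.

```haproxy
global
    # Process-wide ceiling on simultaneous connections (assumed value, tune per workload)
    maxconn 10000
    # Management socket; this is the path queried with socat later in this guide
    stats socket /run/haproxy/admin.sock mode 660 level admin
    # Unprivileged account created by the Ubuntu package
    user haproxy
    group haproxy
    daemon
```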

The defaults block sets values inherited by every frontend and backend that follows it. The three timeouts here matter most in practice: connect (how long to wait for a TCP connection to a backend), client (how long to wait for data from a client before closing), and server (how long to wait for a response from a backend). A value set in defaults applies everywhere unless a specific section overrides it.
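A minimal sketch of how inheritance and overrides might look. The timeout values are illustrative, and the slow_reports backend with its 10.0.0.4 address is hypothetical, included only to show a section overriding an inherited value.

```haproxy
defaults
    mode http
    # Time allowed to establish a TCP connection to a backend server
    timeout connect 5s
    # Maximum client inactivity before HAProxy closes the connection
    timeout client 50s
    # Maximum time to wait for a backend server to respond
    timeout server 50s

backend slow_reports
    # Hypothetical pool: overrides the inherited 50s for long-running responses
    timeout server 120s
    server reports1 10.0.0.4:8080 check
```

Every other frontend and backend in the file keeps the three values from defaults.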

The frontend block is where incoming traffic enters. It specifies the IP and port HAProxy listens on, any conditions to evaluate, and which backend pool to send matching traffic to.

The backend block defines the actual server pool - the list of upstream hosts, the balancing algorithm, and how health checks should be performed. A single frontend can route to multiple backends depending on what conditions are matched.

HTTP load balancing across three VPS

Say you have three backend servers at 10.0.0.1, 10.0.0.2, and 10.0.0.3, each listening on port 8080. HAProxy accepts traffic on port 80 and distributes requests across the pool:

    frontend http_in
        bind *:80
        default_backend app_servers

    backend app_servers
        balance roundrobin
        option httpchk GET /health
        http-check expect status 200
        server app1 10.0.0.1:8080 check inter 10s fall 3 rise 2
        server app2 10.0.0.2:8080 check inter 10s fall 3 rise 2
        server app3 10.0.0.3:8080 check inter 10s fall 3 rise 2

The health check parameters work together to filter out unstable hosts. Three consecutive failed probes mark a server as DOWN - enough to distinguish a real problem from a momentary blip. Getting back into rotation requires two consecutive clean checks, a deliberately lower bar than the threshold for removal, so recovered servers rejoin promptly without risking a flapping host. With a 10-second interval, a newly failed server is detected within roughly 30 seconds, and because probes are scheduled independently of client traffic, they do not delay request handling.
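When every server in a pool shares the same check settings, one way to avoid repeating them is a default-server line. This is an equivalent restatement of the backend above, not a change in behavior. The check keyword stays on each server line here so the sketch does not depend on version-specific handling.

```haproxy
backend app_servers
    balance roundrobin
    option httpchk GET /health
    http-check expect status 200
    # Shared check timing: probe every 10s, 3 failures to go DOWN, 2 successes to come back
    default-server inter 10s fall 3 rise 2
    server app1 10.0.0.1:8080 check
    server app2 10.0.0.2:8080 check
    server app3 10.0.0.3:8080 check
```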

Beyond roundrobin, which cycles through servers in equal turns, two other algorithms are worth knowing. leastconn routes each new request to whichever server currently has the fewest open connections - useful when request processing time varies widely, since long-running jobs will not artificially pile up on one node. source hashes the client's IP address to a specific server, so all connections from the same source always land on the same node as long as it stays available.
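The two alternatives could be written as follows. The backend names are made up for this sketch, and hash-type consistent is an optional addition not mentioned above: it limits how many clients get remapped when a server leaves or rejoins the pool.

```haproxy
backend app_servers_leastconn
    # Send each new request to the server with the fewest open connections
    balance leastconn
    server app1 10.0.0.1:8080 check inter 10s fall 3 rise 2
    server app2 10.0.0.2:8080 check inter 10s fall 3 rise 2
    server app3 10.0.0.3:8080 check inter 10s fall 3 rise 2

backend app_servers_source
    # Hash the client IP so the same source keeps landing on the same server
    balance source
    # Optional: consistent hashing reduces reshuffling when the server set changes
    hash-type consistent
    server app1 10.0.0.1:8080 check inter 10s fall 3 rise 2
    server app2 10.0.0.2:8080 check inter 10s fall 3 rise 2
    server app3 10.0.0.3:8080 check inter 10s fall 3 rise 2
```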

Rate limiting with stick tables

Stick tables let HAProxy track per-client data in memory and act on it at the frontend, without any external component. A practical use case is blocking clients that send too many requests within a short window - useful for protecting APIs from abusive clients or absorbing the early stage of a request flood before it reaches your backends.

    frontend http_in
        bind *:80
        stick-table type ip size 100k expire 1m store http_req_rate(60s)
        http-request track-sc0 src
        http-request deny deny_status 429 if { sc_http_req_rate(0) gt 100 }
        default_backend app_servers

The table stores entries keyed by source IP, holds up to 100,000 records, and automatically removes entries that have been inactive for one minute. The track-sc0 src directive ties each incoming request to its corresponding table entry and increments the counter. Any IP that exceeds 100 requests within a 60-second rolling window is denied with a 429 Too Many Requests response. The threshold is something you tune to your traffic profile: 100 per minute is aggressive for a public-facing page and lenient for an authenticated API endpoint with rate limits built in.
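One possible way to apply different thresholds to different traffic is to pair the rate check with a path ACL. The is_api name and both limits (300 and 60) are assumptions chosen for illustration. Note that the counter still tracks all requests from an IP, not only those matching the path.

```haproxy
frontend http_in
    bind *:80
    stick-table type ip size 100k expire 1m store http_req_rate(60s)
    http-request track-sc0 src
    acl is_api path_beg /api
    # Looser limit for ordinary pages, where one page view triggers many requests (assumed value)
    http-request deny deny_status 429 if !is_api { sc_http_req_rate(0) gt 300 }
    # Tighter limit for API paths (assumed value)
    http-request deny deny_status 429 if is_api { sc_http_req_rate(0) gt 60 }
    default_backend app_servers
```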

Session persistence for stateful applications

Some applications store session state in local process memory and break when requests scatter across different servers - the session lives on one host while the next request lands on another. HAProxy handles this with cookie-based persistence:

    backend app_servers_sticky
        balance roundrobin
        cookie SERVERID insert indirect nocache
        option httpchk GET /health
        http-check expect status 200
        server app1 10.0.0.1:8080 check inter 10s fall 3 rise 2 cookie app1
        server app2 10.0.0.2:8080 check inter 10s fall 3 rise 2 cookie app2
        server app3 10.0.0.3:8080 check inter 10s fall 3 rise 2 cookie app3

HAProxy inserts a SERVERID cookie into the first response with a value like app1. On subsequent requests it reads the cookie and sends the client to the same server. If that server goes down, the client is transparently moved to another and receives a new cookie value.

For cases where cookies are not an option, stick tables can pin clients by source IP instead:

    backend app_servers_sticky_ip
        balance roundrobin
        stick-table type ip size 100k expire 30m
        stick on src
        server app1 10.0.0.1:8080 check inter 10s fall 3 rise 2
        server app2 10.0.0.2:8080 check inter 10s fall 3 rise 2
        server app3 10.0.0.3:8080 check inter 10s fall 3 rise 2

ACL routing: /api to one pool, everything else to another

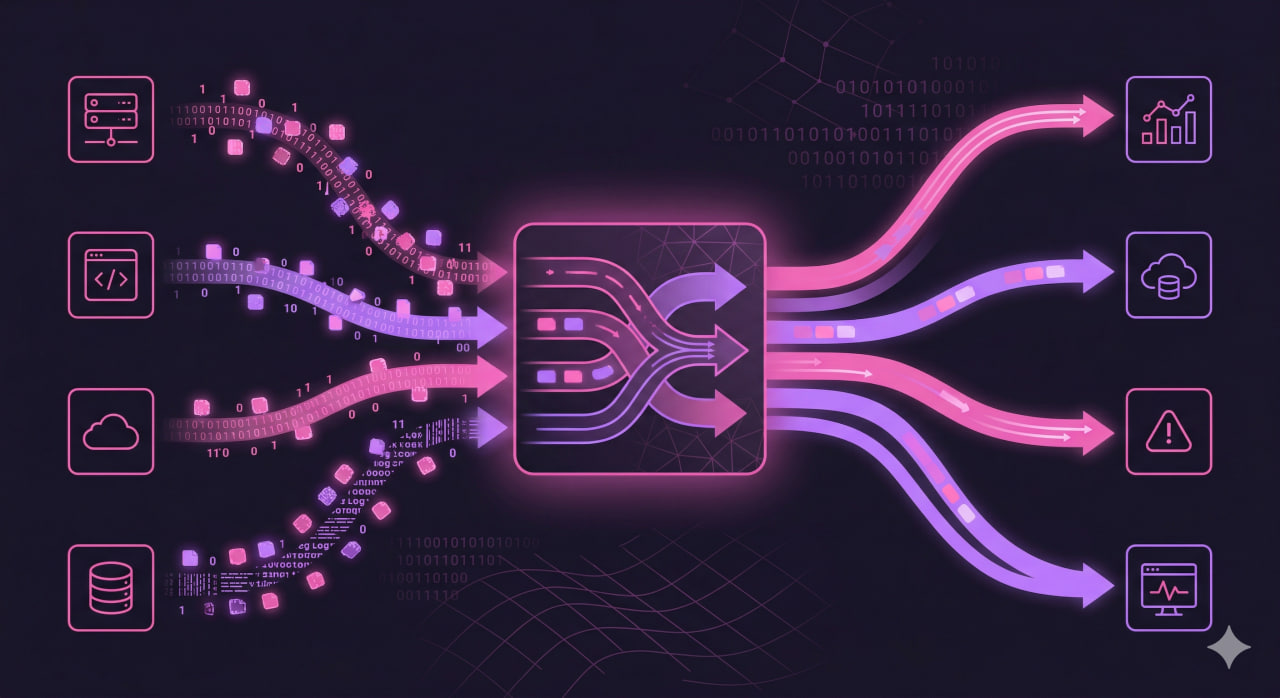

ACLs let you split traffic at the frontend based on URL path, request headers, HTTP method, or other attributes and direct it to different backend pools:

    frontend http_in
        bind *:80
        acl is_api path_beg /api
        use_backend api_servers if is_api
        default_backend app_servers

    backend api_servers
        balance leastconn
        option httpchk GET /api/health
        http-check expect status 200
        server api1 10.0.1.1:9000 check inter 10s fall 3 rise 2
        server api2 10.0.1.2:9000 check inter 10s fall 3 rise 2

    backend app_servers
        balance roundrobin
        option httpchk GET /health
        http-check expect status 200
        server app1 10.0.0.1:8080 check inter 10s fall 3 rise 2
        server app2 10.0.0.2:8080 check inter 10s fall 3 rise 2
        server app3 10.0.0.3:8080 check inter 10s fall 3 rise 2

The condition path_beg /api matches any URL that starts with /api. Multiple ACLs can be combined - you can require both a path pattern and a specific HTTP method before a request routes to a given pool, or split traffic by the Host header to serve several domains from a single HAProxy process without separate instances.
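A sketch of what combined conditions might look like. The ACL names, the admin.example.com hostname, and the api_write_servers and admin_servers backends are all hypothetical. Each backend named in a use_backend rule has to be defined elsewhere in the file, or the config check will fail.

```haproxy
frontend http_in
    bind *:80
    acl is_api     path_beg /api
    # Matches any of the listed HTTP methods
    acl is_write   method POST PUT DELETE
    # Case-insensitive match on the Host header
    acl host_admin hdr(host) -i admin.example.com

    # ACLs listed side by side are ANDed: /api path AND a write method
    use_backend api_write_servers if is_api is_write
    # Remaining /api traffic
    use_backend api_servers if is_api
    # A second domain served by the same HAProxy process
    use_backend admin_servers if host_admin
    default_backend app_servers
```

Rules are evaluated top to bottom and the first match wins, so the more specific rule goes before the general one.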

Stats page

HAProxy includes a built-in web dashboard that shows live metrics for every server and backend pool in your configuration. Add a listen block to enable it:

    listen stats
        bind *:8404
        mode http
        stats enable
        stats uri /stats
        stats realm HAProxy\ Statistics
        stats auth admin:yourpassword
        stats refresh 10s
        stats show-node
        stats show-legends

After reloading HAProxy, visit http://your-ip:8404/stats in a browser. The page shows current server state, active and queued connection counts, cumulative traffic volume, error rates, and average response time per backend. In practice, two signals are most useful: a sudden spike in request rate per second (often an early indicator of a bot wave or DDoS before any alert fires), and error counts climbing on one specific backend while the others stay flat - that pattern typically points to a backend failing on a particular request type rather than being completely unreachable. Port 8404 should not be exposed publicly - restrict it with a firewall rule and access it through an SSH tunnel when needed.
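One way to keep the dashboard off the public interface, in addition to a firewall rule, is to bind it to the loopback address only. This is a variant of the listen block above, offered as a sketch.

```haproxy
listen stats
    # Loopback only: the page is unreachable from other hosts without a tunnel
    bind 127.0.0.1:8404
    mode http
    stats enable
    stats uri /stats
    stats realm HAProxy\ Statistics
    stats auth admin:yourpassword
    stats refresh 10s
```

With this binding, a tunnel opened from your workstation with ssh -L 8404:127.0.0.1:8404 user@your-ip (user and your-ip are placeholders) makes the page available at http://127.0.0.1:8404/stats for as long as the SSH session is open.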

Applying config and checking status

Always validate the configuration file before reloading:

    haproxy -c -f /etc/haproxy/haproxy.cfg

Reload without dropping existing connections:

    systemctl reload haproxy

Check live server state through the admin socket:

    echo "show info" | socat stdio /run/haproxy/admin.sock
    echo "show servers state" | socat stdio /run/haproxy/admin.sock

Common issues

503 with an empty pool. This means HAProxy has taken every server in the backend offline simultaneously. The cause is usually not the servers themselves but a misconfigured health check - the /health endpoint may require authentication, return a redirect (301 or 302), or simply not exist. Check current server state:

    echo "show servers state" | socat stdio /run/haproxy/admin.sock

If hosts show as DOWN, test the health endpoint directly from the HAProxy host, not from your workstation:

    curl -v http://10.0.0.1:8080/health

If the request fails or returns anything other than 200, the problem is in the backend application. HAProxy does not follow redirects during health checks, and with http-check expect status 200 in place, a 301 to a login page will mark the host as down.

Health checks pass but real requests hang. A health endpoint that returns 200 on a static string tells HAProxy the host is reachable, nothing more. If the request handler has a slow database query, a blocked thread pool, or a dependency that is degraded but not down, HAProxy will keep routing traffic to it. Fix this by making your health endpoint do something meaningful - run a lightweight query, check a connection pool, or exercise a code path that would fail if a key dependency were unavailable.
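On the HAProxy side, this could be paired with a check that targets the deeper endpoint and treats a slow answer as a failure. The /health/deep path is a hypothetical endpoint your application would need to implement, and the 2-second limit is an assumed value.

```haproxy
backend app_servers
    balance roundrobin
    # Hypothetical endpoint that exercises the database or another key dependency
    option httpchk GET /health/deep
    http-check expect status 200
    # A probe that connects but gets no response within 2s counts as failed
    timeout check 2s
    server app1 10.0.0.1:8080 check inter 10s fall 3 rise 2
    server app2 10.0.0.2:8080 check inter 10s fall 3 rise 2
    server app3 10.0.0.3:8080 check inter 10s fall 3 rise 2
```

Keep the endpoint itself cheap: it runs on every server every 10 seconds, so a heavy query here adds constant load of its own.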

Clients lose their session between requests. Cookie-based persistence or a stick table resolves this at the proxy level, as described above. A more robust long-term fix is making the application itself stateless by storing sessions in Redis or a shared database - at that point it stops mattering which backend handles each request.

HAProxy fails to start after a config change. Run the syntax check and read the output carefully:

    haproxy -c -f /etc/haproxy/haproxy.cfg

The error message includes the exact line number and a description of what went wrong. The most common mistakes are malformed ACL definitions and unrecognized option names. Also watch for timeout values written without a unit suffix: HAProxy reads them as milliseconds, so use 5s, not 5.

When HAProxy fits and when it doesn't

HAProxy is a good fit when you have several VPS nodes running the same application and want precise control over how traffic reaches them - path-based routing, per-server metrics, configurable failure thresholds, and TCP-level balancing outside of HTTP. It handles high connection counts without meaningful performance degradation and behaves predictably under failure conditions - a property that is worth a lot when something breaks at an inconvenient hour.

If you are running a single server with Nginx and a few upstream services, the built-in upstream block handles it without adding another process to your stack. On Kubernetes, Ingress controllers and LoadBalancer services already manage traffic distribution - layering HAProxy on top adds complexity without benefit.

For a multi-VPS cluster managed directly, without an orchestration layer, HAProxy remains a practical default: minimal external dependencies, a configuration format close to plain English, and a long track record across a wide range of production workloads.